Explainable CBR

Case-based Reasoning for the explanation of intelligent systems.

About Explainable CBR

As stated by DARPA's Explainable Artificial Intelligence program, the goal of Explainable Artificial Intelligence (XAI) is "to create a suite of new or modified machine learning techniques that produce explainable models that, when combined with effective explanation techniques, enable end users to understand, appropriately trust, and effectively manage the emerging generation of Artificial Intelligence (AI) systems".

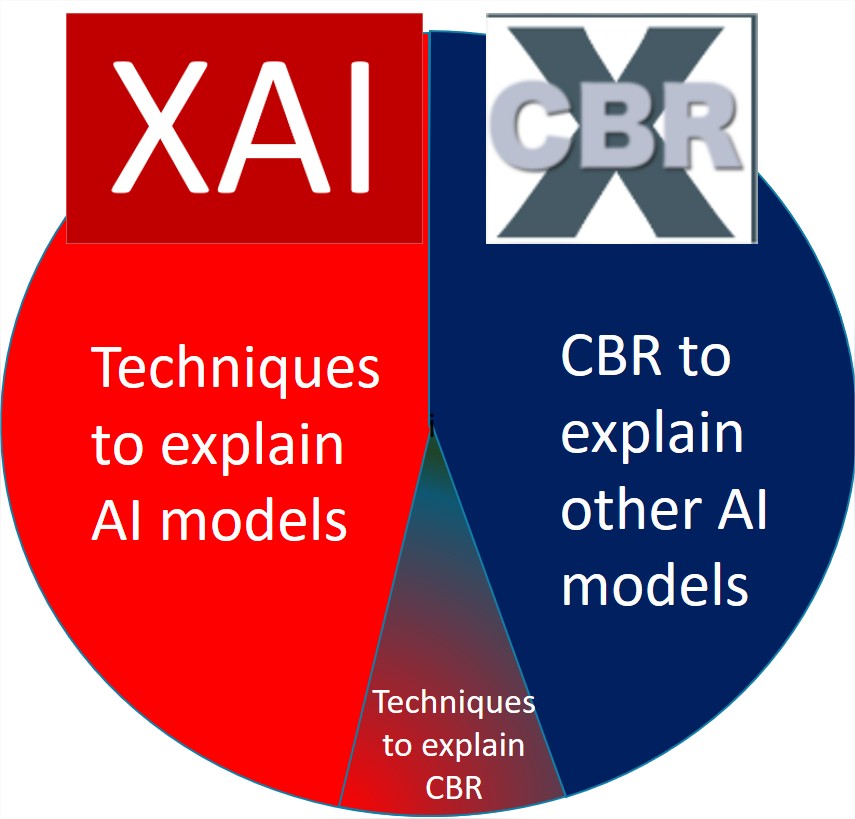

Explainable CBR (XCBR) refers to two lines of work:

- The use of Case-based Reasoning (CBR) methods as the explanation technique to open the black box of other AI techniques, such as neural networks, SVMs or genetic algorithms:

  - Use of experience and memory-based techniques to generate explanations, retrieving and reusing human explanations.

  - Use of twin systems, where the CBR module duplicates or collaborates with the black-box AI model. CBR acts as a second-opinion system that reaches its conclusion through a different reasoning process.

- Research to explain complex CBR reasoning processes:

  - Explaining similarity measures and adaptation processes.

  - Knowledge-intensive explanations based on external resources.

  - Explaining recommender systems.
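As a rough illustration of the twin-system idea, the following Python sketch pairs an opaque model with a nearest-neighbour retriever that justifies each prediction by showing the most similar stored cases that the model labels the same way. Everything here is hypothetical: `toy_model` stands in for any black box, the case base is made-up data, and plain Euclidean distance replaces the domain-specific similarity measures a real CBR system would use.

```python
import math
from typing import Callable, List, Sequence, Tuple

# A case is a feature vector plus the label the black box assigns to it.
Case = Tuple[Tuple[float, ...], str]


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Plain Euclidean distance (a stand-in for a domain-specific similarity measure)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def explain_by_example(
    black_box: Callable[[Sequence[float]], str],
    case_base: List[Tuple[float, ...]],
    query: Sequence[float],
    k: int = 2,
) -> Tuple[str, List[Case]]:
    """Predict with the black box, then retrieve the k nearest stored cases
    that the black box classifies the same way, as supporting examples."""
    prediction = black_box(query)
    # Relabel the case base with the black box's own outputs so the twin mirrors the model.
    labelled = [(tuple(c), black_box(c)) for c in case_base]
    supporting = [c for c in labelled if c[1] == prediction]
    supporting.sort(key=lambda c: euclidean(c[0], query))
    return prediction, supporting[:k]


def toy_model(x: Sequence[float]) -> str:
    """Hypothetical stand-in for an opaque model (e.g. a neural network)."""
    return "approve" if 0.6 * x[0] + 0.4 * x[1] > 0.5 else "reject"


cases = [(0.9, 0.8), (0.7, 0.4), (0.2, 0.1), (0.3, 0.6), (0.8, 0.2)]
pred, examples = explain_by_example(toy_model, cases, (0.75, 0.5))
print(pred, examples)
```

Labelling the case base with the black box's outputs, rather than with ground-truth labels, keeps the retrieved examples faithful to what the model actually does; this is one design choice among several, not a prescribed method.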

xCOLIBRI

xCOLIBRI is an evolution of the COLIBRI platform focused on applying CBR to the explanation of intelligent systems.

From the CBR perspective, research in XAI has highlighted the importance of leveraging human knowledge to generate and evaluate explanations. We have therefore created a version of COLIBRI focused on XAI that supports the development of Case-Based Explanation systems. It provides several implementations (in Java, Python or JavaScript) that can be integrated into existing AI systems to enhance their explainability.

CBRex

Case-based Reasoning for the explanation of intelligent systems.

TIN2017-87330-R 2018-2021

The main goal of this project is to research techniques for explaining artificial intelligence systems in order to increase their transparency and reliability.

The main contribution of the project to XAI is the use of Case-based Reasoning (CBR) methods to add explanations to several AI techniques through reasoning by example. CBR systems have a track record of interactive explanations and of exploiting memory-based techniques to generate them. The memory of previous facts and decisions will be the main technique used in this project to explain the reasoning behind some AI systems. Specifically, the project will investigate generic explanation techniques that can be extended to different domains, to both symbolic and subsymbolic AI techniques, and to personalized explanations.

PERXAI

Personalized Explainable Artificial Intelligence from experiential knowledge.

PID2020-114596RB-C21 2021-2023

Research in Explainable Artificial Intelligence (XAI) has boomed in recent years, generating wide interest in institutions and companies as well as in academia. In the last few years, there has been a growing need to understand the reasons that lead an AI system to reach a conclusion, carry out a line of reasoning, or make a prediction, a recommendation or a decision, because this understanding is what allows users to trust the system. In this context, numerous XAI techniques have emerged and are being applied in a growing number of practical areas.

The PERXAI project is proposed as an extension of the results of our previous CBRex project, where we investigated the application of Case-Based Reasoning (CBR) as a surrogate technique to explain AI algorithms. That project clearly identified the need to approach the explanation of AI processes from a much broader perspective than the XAI algorithm itself, including the data used by the intelligent system and the way the explanation is presented (displayed) to the user, where interactivity plays a very important role. Moreover, to be effective, explanations must be personalized to the user they are addressed to. Generating complete and personalized explanations is inherently complex. However, the CBRex experience shows that explanation processes follow common patterns that can be abstracted from different use cases and reused across domains.

Thus, the main objective of this project is to build a catalogue of comprehensive explanation strategies that are captured from user experience in different domains and applications, and that can be abstracted, formalized and reused for other domains, applications and users. This follows a CBR approach complemented by ontology-based semantic tagging that makes it easier to transfer the strategies to other contexts.
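To make the catalogue idea more concrete, here is a minimal Python sketch of the retrieval step, assuming each stored explanation strategy is annotated with a set of tags drawn from an ontology. The `ExplanationStrategy` class, the tag names and the two strategies are invented for illustration, and flat Jaccard overlap stands in for the richer, hierarchy-aware ontology similarity such a system would likely need.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class ExplanationStrategy:
    """Hypothetical catalogue entry: a reusable explanation strategy plus its semantic tags."""
    name: str
    tags: FrozenSet[str]          # stand-ins for ontology concepts (user, data type, AI model)
    steps: Tuple[str, ...] = ()   # ordered explainer/presentation steps to reuse


def jaccard(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """Tag-set overlap in [0, 1]; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def retrieve(catalogue: List[ExplanationStrategy],
             context: FrozenSet[str]) -> Optional[ExplanationStrategy]:
    """Return the stored strategy whose tags are most similar to the new context."""
    if not catalogue:
        return None
    return max(catalogue, key=lambda s: jaccard(s.tags, context))


catalogue = [
    ExplanationStrategy("feature-attribution-for-experts",
                        frozenset({"tabular", "domain-expert", "classifier"}),
                        ("feature_importance", "bar_chart")),
    ExplanationStrategy("example-based-for-lay-users",
                        frozenset({"image", "lay-user", "classifier"}),
                        ("nearest_examples", "gallery")),
]

new_context = frozenset({"image", "lay-user", "regressor"})
best = retrieve(catalogue, new_context)
print(best.name)
```

Only retrieval is shown; in a full CBR cycle the retrieved strategy would then be adapted (reused) to the new context, revised, and retained in the catalogue.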

AUDITIA-X

Auditing of AI systems through explainability (AUDITIA-X).

PID2023-150566OB-I00 2023-2026

The impact of Artificial Intelligence (AI) on value chains and on social missions and challenges is unquestionable. However, adopting this technology is not free of risks arising from possible biases and inappropriate uses. These risks have been highlighted by the Digital Spain 2025 Plan and have recently been regulated by the European Union. This project proposes developing mechanisms for analyzing and evaluating predictive AI systems in order to identify their different biases and risks, and to adapt them to current and future regulatory and ethical frameworks.

The project builds on existing results in eXplainable AI (XAI) to develop methods that provide transparency to AI systems, specifically those based on machine learning (ML) models. However, only very recently, as a result of emerging regulations, has the concept of explainability started to be linked to auditing, even though the two have obvious synergies. Appropriately combining XAI methods with data analysis and visualization techniques and with complex ML model generation processes can lead to prototypical procedures for comprehensively analyzing and evaluating the behavior of ML models.

The main challenge is the heterogeneous nature of AI systems and application domains, which makes it difficult to mechanize the auditing and governance procedure for a specific model in a specific domain and with a specific dataset. Consequently, generic or theoretical methodologies for auditing AI systems are inherently difficult to instantiate for the specific features of each system and to align with regulatory and even ethical guidelines, where variability is high. As an alternative, this project proposes collecting practical, validated use cases that can be reused when auditing similar AI systems. To this end, it will apply the Case-based Reasoning (CBR) paradigm, which is based on reusing similar experiences; the applicant research group is a reference in this field, with more than 20 years of experience.

Carrying out this project will generate knowledge in several fields that will directly help meet the challenge of implementing AI in society. On the one hand, the project will produce concrete evaluations and risk analyses of AI systems applied to specific problems in high-impact domains such as medicine, cybersecurity and environmental intelligence, among others. On the other hand, a reusable catalogue of audit experiences will be made available to the scientific, industrial and regulatory community as a reference for evaluating and analyzing other AI systems.

People in Explainable CBR

Juan A. Recio García

Belén Díaz Agudo

Pedro Antonio González Calero

Guillermo Jiménez Díaz

Antonio A. Sánchez Ruiz-Granados

Jose Luis Jorro-Aragoneses

Marta Caro-Martínez